A few months ago, I wrote a post on the neuroscience of vegetative states (read it here). This week, I was completely blown away by Martin’s story reported on NPR’s new show, Invisibilia (listen here). This is a powerful story illustrating the incredible ability of the human brain to change and heal.


Seeing the light

Imagine being in your early thirties, married and excited about what life has in store for you. One day, you start to notice that your peripheral vision is not as good as it once was. You have a hard time detecting things that are not right in front of you; objects and people start to appear very blurry; reading and driving become impossible. After consulting your doctor about this problem, you are told that you have a genetic condition called retinitis pigmentosa. There is nothing that can be done, and eventually you will lose your sight completely. You will just have to adjust to this new way of life.

In the eye, the cells that convert light from the external world into neural signals are called photoreceptors (the rod and cone cells in the diagram below). They sit in the retina at the back of the eye and detect light as it hits them. Once they detect this light, they translate it into electrical signals, a language the brain can understand, which is crucial for transmitting visual information to the brain. Retinitis pigmentosa causes these rod and cone cells to degenerate, breaking down the interface between the world (light) and the brain. Without these cells, information about a visual scene gets stuck and cannot reach the brain to be processed, eventually leading to blindness.

(Jamie Simon, Salk Institute for Biological Studies)

Larry Hester was diagnosed with retinitis pigmentosa more than 30 years ago, but recently he was able to see light again for the first time in many years. Researchers at Duke University implanted a device with many tiny electrodes that can essentially bypass the damaged rods and cones and send information to the brain. Here is how it works: Larry wears glasses with a video camera attached, which sends information about what Larry is looking at through a tiny wire to a computer attached to his belt. The computer processes this information and relays it to the electrodes on the retina, which activate the cells that project into the brain. In this way, the device translates light information into neural activity, allowing Larry to experience and interpret the image he is looking at. At a basic level, the device performs the function of the photoreceptors that have died.
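To get a feel for why the result is only a blurry version of the world, here is a minimal Python sketch of the general idea: a detailed camera frame has to be squeezed onto a small number of electrodes. The 6 × 10 grid (60 electrodes) is an assumption based on the commonly reported layout of the Argus II implant, and the function name `frame_to_electrodes` and the simple "brighter pixel, stronger stimulation" mapping are hypothetical simplifications, not the device's actual processing.

```python
# Hypothetical sketch: squeeze a grayscale camera frame (pixel values 0-255)
# onto a coarse electrode grid by averaging the pixels each electrode "covers".
# ASSUMPTIONS: a 6 x 10 grid and a linear 0.0-1.0 stimulation scale.

def frame_to_electrodes(frame, rows=6, cols=10):
    """Return a rows x cols grid of stimulation levels between 0.0 and 1.0."""
    height, width = len(frame), len(frame[0])
    block_h, block_w = height // rows, width // cols  # leftover edge pixels are ignored
    grid = []
    for r in range(rows):
        row_levels = []
        for c in range(cols):
            block = [
                frame[y][x]
                for y in range(r * block_h, (r + 1) * block_h)
                for x in range(c * block_w, (c + 1) * block_w)
            ]
            # Brighter patch of the image -> stronger (hypothetical) stimulation.
            row_levels.append(sum(block) / len(block) / 255)
        grid.append(row_levels)
    return grid


if __name__ == "__main__":
    # Toy 60 x 100 frame: dark on the left half, bright on the right half.
    frame = [[0] * 50 + [255] * 50 for _ in range(60)]
    for row in frame_to_electrodes(frame):
        print(" ".join("#" if level > 0.5 else "." for level in row))
```

Thousands of pixels collapse into 60 values, which is one intuitive reason the current technology gives only a coarse, low-resolution picture.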

See Larry’s amazing experience here: http://www.wimp.com/bioniceye/

It is important to note that this technology is new and limited, giving Larry only a blurry version of the world. Despite this, I have no doubt that the technology and its use will continue to improve rapidly, inching closer and closer to giving people normal vision. Larry says it is his dream to be able to see his wife’s blue eyes again. Although he is not able to achieve this with his current implant, at least he is one step closer.

Dr. Paul Hahn, an Assistant Professor at the Duke Eye Center, points out that we are entering an era of medicine where, for the first time, instead of just watching as patients lose basic abilities such as vision or movement, we can start to build devices that will help restore those abilities. What an exciting new time!

Researchers are often discouraged by costly failures and uncertain obstacles, but work like this is a necessary reminder that every once in a while, when things work out, it is actually possible to give someone back the gift of sight.

Citations:

Price, Jay. “Duke Fits First Patient in State History with ‘Bionic Eye’.” Newsobserver. N.p., 10 Sept. 2014. Web. 19 Oct. 2014.

http://www.newsobserver.com/2014/09/10/4140145/duke-fits-first-patient-in-state.html

Stereotype This

On a crisp fall night last November, a young woman was heading home after an evening out with friends. In the early hours of the morning, she reportedly sped down the street and hit a parked car. Dazed and disoriented, she wandered to a nearby house in search of help. After she banged on the door, it opened to reveal a man behind the screen, standing there with his shotgun raised toward her. Before she could say anything, he shot her in the face.

The facts of this story make it particularly shocking and horrific, but it is made more complicated by the fact that Renisha McBride was African American and her killer, Theodore Wafer, is white.

Some scholars have argued that racial bias has declined due to the strengthening of egalitarian social norms, but anyone who argues that racism is a thing of the past is not seeing the full picture. It is a complex and deeply ingrained problem in our society today, and that has become all the more clear to me since moving to Chicago. I hear it in the personal story of a friend who was asked “do you belong here?” in the elevator of his own building; I see it in reports of the persistent tragedy of gun violence on Chicago’s South Side; I read it in the news cycles about Trayvon Martin and Michael Brown; story after story demonstrating hatred and prejudice, on all sides.

We live in a world in which displaying outward prejudice towards others is no longer socially acceptable. We know it’s not ok, and most people try to behave in ways that do not imply obvious prejudicial feelings. We think we know ourselves and how we will react in a given situation, but what if our brains hold beliefs that we are not consciously aware of? And if this is the case, how could we even detect these hidden beliefs?

We can turn to neuroscience for answers.

Modern neuroscience has the ability to probe the nervous system in ways that go beyond our reported and conscious experience. It can detect processes that are going on underneath the surface, and doing so might help us better understand why people who sincerely believe they are not racist might still make racist decisions in the heat of the moment.

Let’s start at the beginning: looking at a face.

Our eyes pick up information about how that face reflects light. You might think that once this information reaches the eye, it gets sent into the brain to be processed and interpreted. While this is partially true, the story is a little more complicated.

Face Area in the brain

There is an area in the brain that is specialized for interpreting faces, often called the fusiform face area. When the wolf sees the attractive lady’s face, his own face area activates (the orange blob in the back of the brain, above the ear).

Research has shown that this area responds more strongly to members of one’s own racial group (your ingroup) than to members of other racial groups (your outgroup). As early as 170 milliseconds after seeing a face, this difference shows up in electrical activity recorded from this face area. Because this signal (known as the N170) is a negative-going wave, the solid line in the figure below dipping to a lower point represents a larger response to people within an individual’s own group.
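To make "reaches a lower point" concrete, here is a minimal Python sketch of how an early face-related signal like this (often called the N170) is commonly quantified: find the most negative voltage within a time window around 170 ms and compare conditions. The waveform values, the 150 to 200 ms window and the function name `n170_peak` are all hypothetical, invented purely for illustration; they are not data from the studies cited in this post.

```python
# Hypothetical sketch: measure the N170 as the most negative voltage in a window.
# The waveforms are INVENTED toy numbers, not data from any study.

def n170_peak(times_ms, microvolts, window=(150, 200)):
    """Return (time, amplitude) of the most negative sample inside the window."""
    in_window = [
        (t, v) for t, v in zip(times_ms, microvolts) if window[0] <= t <= window[1]
    ]
    return min(in_window, key=lambda pair: pair[1])


times = [100, 130, 150, 170, 190, 210, 250]
ingroup = [1.0, -0.5, -3.0, -6.0, -3.5, 0.5, 1.5]   # toy "ingroup" waveform
outgroup = [1.0, -0.5, -2.5, -4.5, -3.0, 0.5, 1.5]  # toy "outgroup" waveform

t_in, amp_in = n170_peak(times, ingroup)
t_out, amp_out = n170_peak(times, outgroup)
print(f"ingroup peak {amp_in} uV at {t_in} ms; outgroup peak {amp_out} uV at {t_out} ms")
# Because the wave is negative, a lower (more negative) peak is a LARGER response.
print("larger response to ingroup faces:", amp_in < amp_out)
```

Real studies average many trials per person and use carefully chosen electrodes and windows; the sketch only shows why a deeper dip is read as a bigger response.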

This evidence suggests that at the very earliest stages of processing a face (just 0.17 seconds after seeing it!), information about social group membership may already be influencing our response at an unconscious level. If so, visual perception is not simply a direct, sequential transfer of information from the eyes to the brain, but a complicated mixture of what we perceive and a whole lifetime of previous experience and cultural exposure. It would be like having an unusual camera: instead of taking an objective picture of the world, it produces a doctored image, putting some parts in focus and blurring others based on the pictures it has taken before.

The Amygdala

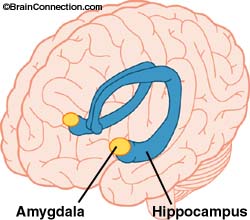

We also see racial differences emerge in a small structure in the brain called the amygdala, an almond-shaped cluster of cells thought to be involved in memory, decision-making and emotional reactions.

Jaclyn Ronquillo and her colleagues carried out a study in which they flashed photos of faces to participants while measuring brain activity. The faces were either light- or dark-skinned versions of white or black individuals (see examples below). They found that darker skin tones elicited a greater amygdala response (the “white dark”, “black light”, and “black dark” bars labeled below), which they interpreted as a heightened fear response. Unexpectedly, a related study (Lieberman et al., 2005) found that African-American subjects also showed greater amygdala activity in response to black faces, suggesting that the amygdala may be responding to cultural exposure rather than simply to ingroup/outgroup categories.

These two brain regions, the face area and the amygdala, seem to be responding to slightly different things. Face area activity is related to familiarity with the face (have you seen this face before?), while the amygdala response seems to be more of a learned fear response based on previous experience with the world. Together, these studies point to some potential sources of bias that can hide in the depths of the brain.

If these biases exist, how are we able to appear unbiased?

Humans must constantly navigate a social world, and they need to play by the rules of the culture to be accepted. People must try to curb any unwanted influences of implicit stereotypes, an action that seems to involve a brain region that detects internal conflict: the anterior cingulate cortex (ACC). Activity in this part of the brain has been associated with a person’s motivation to respond without prejudice. During times of internal conflict, the ACC will activate, helping to hide our bias not just from the outside world, but also from ourselves!

So we are left with a battle in the brain.

A brain faced with overt questioning regarding race may have the cognitive control to produce the socially acceptable answer (through ACC involvement); however, processes that bypass conscious awareness and produce implicit bias may still be able to influence behavior when decisions are not carefully evaluated.

How does this help us understand what happened to Renisha McBride?

Is it possible that Theodore Wafer, stumbling to his door in the middle of the night, committed this terrible atrocity because of the signals coming from his face area or amygdala? Could this have been prevented if his ACC had kicked in to regulate his behavior?

People are convinced that they excel at seeing things objectively and without bias, but stories like this one and neuroscientific data tell us otherwise. The brain is constantly filling in the blanks with past experience, taking shortcuts that help us sift through enormous amounts of information every day. Gaining a deeper understanding of the biological basis of racism can better arm us against letting it influence the decisions we make every day.

What can we do when these shortcuts do more harm than good?

The first step is being aware that implicit bias exists and that it can influence behavior in ways we may not notice. Knowing that our nervous system may be working behind the scenes in ways we don’t directly experience is our first line of defense.

Research also suggests that we can change these supposedly automatic responses by adopting a different framework and redefining the boundaries of the ingroup. In some studies, the automatic bias response disappears when the definition of a group is shifted, for example from racial boundaries to membership in one’s own sports team. When an individual sees a face as a fellow teammate rather than as a member of a different racial group, she no longer responds differently to racial groups that are not her own.

In this country, prejudice against African Americans is one clear example of ingroup/outgroup mentality, but this concept can be applied to many of the conflicts seen around the world. Whether the line is drawn by race, religion, or gender, the overarching concepts stay the same.

This is a complicated issue that is deeply ingrained in history, culture and politics, but I truly believe that understanding the role the brain plays in social prejudice and stereotyping is a crucial step towards decreasing and ultimately eliminating them.

Sure, but how is this relevant to me?

Not many people will be faced with a stranger on their porch in the middle of the night or required to make a snap judgement while holding a gun; however, these principles are highly relevant in any kind of workplace as well. Check out this great video that Google produced about implicit bias.

We need to take responsibility for understanding the assumptions we make on a day-to-day basis, and shift the way we view others. Ask yourself: who is in my group, and could these lines be changed? Can a group simply be fellow city-dwellers, leaving race out of it? What would the world look like if everyone became more aware of the biases they have?

I’d love to hear your thoughts on this!

Citations:

Lieberman, M. D., et al. (2005). An fMRI investigation of race-related amygdala activity in African-American and Caucasian-American individuals. Nature Neuroscience, 8(6), 720–722.

Ratner, K. G., & Amodio, D. M. (2013). Seeing “us vs. them”: Minimal group effects on the neural encoding of faces. Journal of Experimental Social Psychology, 49, 298–301. doi: 10.1016/j.jesp.2012.10.017

Ronquillo, J., Denson, T. F., Lickel, B., Lu, Z., Nandy, A., & Maddox, K. B. (2007). The effects of skin tone on race-related amygdala activity: An fMRI investigation. Social Cognitive and Affective Neuroscience, 2, 39–44.

Sugar Head

In 2003, Dr. Robert Lustig saw a six-year-old Latino boy in his clinic in Salinas, California. Juan weighed 100 pounds and was wider than he was tall, but his mother insisted he ate a healthy diet. When Dr. Lustig looked more closely, Juan’s diet did seem reasonable for a growing boy; however, through careful questioning, Dr. Lustig found a somewhat unexpected culprit: orange juice. Juan had gotten into the habit of drinking an entire gallon of orange juice per day. That is approximately 1,760 calories and 336 grams of sugar, which is well over a cup of sugar, with 0 grams of dietary fiber. Why was Juan doing this? Because the government provided the juice to his family for free, and his mother had no idea how harmful it could be to her son’s health. In fact, she believed that the more he drank, the more nutrients he was getting.
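The gallon-of-juice numbers are easy to sanity-check. This minimal Python sketch assumes typical label values of about 110 kcal and 21 g of sugar per 8 fl oz serving of orange juice and roughly 200 g of granulated sugar per cup; those three figures are assumptions, not numbers from Dr. Lustig.

```python
# Rough check of the orange-juice arithmetic.
# ASSUMED values: ~110 kcal and ~21 g sugar per 8 fl oz serving,
# and ~200 g of granulated sugar in one cup.

FL_OZ_PER_GALLON = 128
SERVING_FL_OZ = 8
KCAL_PER_SERVING = 110      # assumed label value
SUGAR_G_PER_SERVING = 21    # assumed label value
SUGAR_G_PER_CUP = 200       # assumed, granulated sugar

servings = FL_OZ_PER_GALLON // SERVING_FL_OZ   # 16 servings in a gallon
calories = servings * KCAL_PER_SERVING
sugar_g = servings * SUGAR_G_PER_SERVING
cups_of_sugar = sugar_g / SUGAR_G_PER_CUP

print(f"{servings} servings: {calories} kcal, {sugar_g} g sugar (~{cups_of_sugar:.1f} cups)")
```

Under these assumptions the script prints `16 servings: 1760 kcal, 336 g sugar (~1.7 cups)`, matching the figures above and showing that "over a cup" is, if anything, an understatement.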

Although obesity has received a lot of media attention in the last few years, I was still floored by some of the statistics in Fed Up, a documentary I recently watched (and highly recommend). I was surprised that it focused on the role sugar plays in the growing epidemic, a definite shift from the more common topics of saturated fat and exercise. The numbers get a little scary:

In the United States, an estimated 93 million Americans are affected by obesity.

Kids watch an average of 4,000 food-related ads every year (roughly 11 per day).

A 20-ounce bottle of soda contains the equivalent of approximately 17 teaspoons of sugar.

It will take a 110-pound child 75 minutes of bike riding to burn off the calories in one 20-ounce bottle of soda.

In 2012, Americans consumed an average of 765 grams of sugar every 5 days, or 130 pounds each year.
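A few of these statistics can be checked with simple arithmetic. The sketch below is a back-of-the-envelope check, not the film's own method, and it relies on assumptions not stated in the film: 365 days per year, about 240 kcal in a 20-ounce cola, roughly 4 METs for leisurely cycling, and the common adult approximation that calories burned ≈ METs × body mass (kg) × hours (children's energy use differs somewhat).

```python
# Back-of-the-envelope checks of the Fed Up statistics.
# ASSUMPTIONS (not from the film): 365 days/year, ~240 kcal in a 20-oz cola,
# ~4 METs for leisurely cycling, and kcal ~= METs x body mass (kg) x hours.

GRAMS_PER_POUND = 453.592
KG_PER_POUND = 0.453592
SODA_KCAL = 240       # assumed calories in a 20-oz cola
CYCLING_METS = 4.0    # assumed intensity of leisurely cycling

# 4,000 food ads per year, expressed per day.
ads_per_day = 4000 / 365

# 765 g of sugar every 5 days, scaled up to a year and converted to pounds.
sugar_lb_per_year = 765 / 5 * 365 / GRAMS_PER_POUND

# 75 minutes of cycling for a 110-pound child.
child_kg = 110 * KG_PER_POUND
kcal_burned = CYCLING_METS * child_kg * (75 / 60)

print(f"ads per day: {ads_per_day:.1f}")
print(f"sugar per year: {sugar_lb_per_year:.0f} lb")
print(f"cycling burns ~{kcal_burned:.0f} kcal vs ~{SODA_KCAL} kcal in the soda")
```

The 4,000-ad figure works out to just under 11 ads a day, and 765 g every 5 days annualizes to roughly 123 pounds, in the same ballpark as (though a bit below) the quoted 130 pounds, so both are best read as approximations. The cycling estimate lands near the soda's calorie count, which makes the 75-minute figure plausible under these assumptions.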

We all know that the Western Diet, characterized by high saturated fat and refined sugar, has been implicated in a range of diseases such as type 2 diabetes, cardiovascular disease, and stroke, but do we know whether and how this diet is affecting our brain?

We start our quest for an answer with a small neural structure called the hippocampus, named after the Greek words hippos (“horse”) and kampos (“sea monster”) because of its unusual, seahorse-like shape. Do you see the resemblance?

Much work has been done to understand this structure (it happens to be the one that Henry Molaison had removed in the 1950s; see About Gray Matters for more on his story). It is heavily implicated in memory, particularly memories involving spatial cues, such as remembering how to navigate around your house. Because the hippocampus contains unusually large cells, it is thought to be particularly vulnerable to environmental insults, including diet.

What protects the hippocampus from environmental toxins?

The blood brain barrier is a crucial component of the nervous system. It serves as a gatekeeper that “decides” which components of the blood must stay out and which nutrients are allowed in, protecting the brain from harmful substances.

Think about a football stadium with entrances around its edge: the blood brain barrier would be like the people checking tickets. Only those with tickets are allowed in. Now imagine what might happen at the Super Bowl if many of those ticket checkers simply weren’t there. People without tickets would start hopping over the barriers and entering the stadium to watch the game for free. This is what happens when the blood brain barrier is damaged: unwanted substances get into the brain.

So how does this answer our question about how Western Diet affects the brain?

Recent research has revealed two important trends:

1. Western Diets seem to stimulate the intestines to produce a protein called amyloid-β.

2. Elevated levels of amyloid-β proteins have been shown to damage the blood brain barrier in rats.

Why does this matter?

One of the hallmark signs of a brain affected by Alzheimer’s disease is the presence of plaques, particularly in the hippocampus. These plaques can be thought of as dangerous junk, cluttering the space between cells and disrupting normal function, and they are made up largely of accumulated amyloid-β protein. Starting to see how this story comes together?

Based on the current evidence, we can start to form a theory: A Western Diet increases bodily levels of amyloid-β protein, leading to increased amyloid-β levels in the blood. This elevation could contribute to blood brain barrier damage (equivalent to losing those ticket checkers!), resulting in more amyloid-β (ticketless individuals) being able to get into the brain. Once in the brain, these proteins can damage the large cells in the hippocampus, which are particularly vulnerable. The theory is laid out nicely in this diagram:

(1) Western Diet results in (2) elevated levels of amyloid-β (Aβ) from the small intestine, (3) thus increasing Aβ levels in the vascular system. (4) High levels of Aβ contribute to blood brain barrier damage, (5) which leaves the hippocampus (HPF) vulnerable to damage by Aβ.

So this leaves us with the possibility that obesity and dementia share a common contributing factor: overconsumption of foods high in saturated fatty acids and simple sugars, in other words, the Western Diet. Given how prevalent obesity is in this country and others, the possibility that these people are also at higher risk of future dementia is incredibly concerning.

Although not many people drink a gallon of juice every day, I have to admit that before reading Dr. Lustig’s book and watching Fed Up, I went out of my way to drink orange juice because I thought it was healthy for me. I did not realize how much sugar was in it! Now I just eat the fruit. I hope this will be better for my body and my brain in the long run.

For more information about the role sugar plays in the obesity epidemic, I recommend:

1. Fat Chance: The bitter truth about sugar by Robert Lustig

2. Fed Up Documentary (more information found here: http://fedupmovie.com/#/page/about-the-issue?scrollTo=facts)

Citations:

http://www.telegraph.co.uk/foodanddrink/10238549/Is-fruit-juice-bad-for-your-health.html

Quick Review: Parkinson’s Disease

In 1817, James Parkinson published a book called An Essay on the Shaking Palsy, in which he described six patients who all showed a similar collection of symptoms: increased muscle tone, slow and reduced movements, a stooped posture and a resting tremor. Decades later, the famous French neurologist Jean-Martin Charcot coined the term “Parkinson’s Disease,” and the description of the disease in medical textbooks remains much the same today (although additional symptoms, such as altered cognitive abilities, problems with impulse control, sleep disturbances and vision abnormalities, have since been added).

What do we know?

We know that in Parkinson’s Disease, dopamine-secreting cells deep in the brain die, affecting systems that control body movement, eye movements, and emotional and cognitive functions. By the time someone starts to show motor symptoms, an estimated 40-60% of these cells may already be gone; the brain is thought to compensate for their loss up to that point. Although scientists are still exploring what causes this cell degeneration, we do know that when we look at these dopamine-secreting cells from someone with Parkinson’s Disease under a microscope, we can see clumps called Lewy bodies. This is the brown blob inside the brain cell shown below. Lewy bodies can be thought of as junk, and they signal poor cell health.

What is not known?

Scientists are still working toward a complete understanding of Parkinson’s Disease. You may wonder how there can still be so much we don’t know when the symptoms have been described consistently for nearly two hundred years. Take this example:

A few years ago, a 58-year-old man who had suffered from Parkinson’s for over 10 years went to see Dr. Bastiaan Bloem in the Netherlands. The disease had ravaged his brain, and by the time Dr. Bloem saw him, he could barely walk on his own. When he tried, he often froze in place, unable to take even a single step; when he did get going, he could take only a few steps before falling over. But this man told Dr. Bloem something amazing: “Yesterday I rode my bicycle for 10 kilometers.” Dr. Bloem was in disbelief, and only after seeing it with his own eyes could he begin to believe it.

Watch the video here: https://www.youtube.com/watch?v=aaY3gz5tJSk

It is still unknown why someone who can barely stand and walk on his own is able to ride a bicycle smoothly. Maybe the feedback from the pedals provides a steady cue for starting each movement (something not present while walking), or perhaps riding uses a different part of the brain than walking does. There have also been reports of people with Parkinson’s Disease being able to dance, run, or walk smoothly when given specific emotional or visual cues. Why this happens is still not clearly understood, and more work on how the motor system operates is needed to explain these strange reports.

Are there treatments?

The most common treatment for Parkinson’s Disease is a drug called L-Dopa, which increases concentrations of dopamine in the nervous system. More recently, however, a surgery that implants an electrode deep in the brain has become more popular. This is called deep brain stimulation, and it has revolutionized treatment options for people diagnosed with Parkinson’s, especially those with early onset.

Check out this video that shows the amazing effects of deep brain stimulation for a patient with Parkinson’s Disease: https://www.youtube.com/watch?v=uBh2LxTW0s0

I have been absolutely fascinated by how well this surgery can work for some people, and by the most important detail of all: neurosurgeons and neuroscientists still don’t really understand why placing an electrode in this one spot in the brain has this effect!

This is just a very brief overview of some of the things I find most interesting about research on Parkinson’s Disease. There is a wealth of information out there, and some people spend their whole careers studying it. With advances in technology that let us sequence genes, stain cells, and image the whole brains of living people with this disease, we are inching closer to better treatments and maybe, one day, a cure.

Citations:

http://en.wikipedia.org/wiki/History_of_Parkinson%27s_disease

http://www.nytimes.com/2010/04/01/health/01parkinsons.html?_r=0

Who me?

Imagine you are a young woman, relieved that you are finally done with your 3am shift at Ev’s Eleventh Hour Sports Bar in Queens, New York. You drive home to your city apartment building and park in the lot across the street. As you walk toward your door, you hear someone behind you. Your heart jumps into your throat as you see a man quickly approaching. You start to run toward a nearby intersection. Before you make it, you are stabbed twice in the back, and you scream with all your remaining strength, “Oh my god, he stabbed me! Help!” A neighbor yells out “Let that girl alone!” and you think you are safe as your attacker runs away. You crawl toward your apartment door in search of help, but it’s locked. You lie there, weak and exhausted.

Unfortunately, this is the fate that met Catherine Genovese in March 1964. She was the eldest of five children in a middle-class Italian American family from Brooklyn.

As she lay in the alley next to her building, her attacker, Winston Moseley, returned. He stabbed her again, raped her and stole $50 before leaving her in a hallway. The whole attack spanned 30 minutes. After the second attack, the police were called, but she died en route to the hospital. Later reports revealed that about a dozen people saw or heard some part of the attack, including the man who had yelled out and scared Moseley away the first time. But if so many people heard her screams or saw her crawling in the street, why hadn’t anyone done something?

Did these neighbors not know what to do, or did they just assume that someone else would call the police? One neighbor later admitted that they “didn’t want to get involved.”

This famous incident became a key example of what is known as the bystander effect. Social psychologists have established that the presence of other people in a critical situation can actually decrease the likelihood that someone will help. In their seminal work on the topic, John Darley and Bibb Latané, researchers at Columbia University, identified several possible explanations for this behavior:

1. Diffusion of responsibility: people tend to divide the responsibility by the number of bystanders. The more people there are, the less each individual will feel compelled to act.

2. Evaluation apprehension: people are fearful of others judging them while acting publicly. They are afraid that they will make a mistake or do the wrong thing with others watching.

3. Pluralistic ignorance: people tend to look to others’ reactions when interpreting an ambiguous situation. If everyone else appears calm, each person may conclude that nothing is wrong.

In a study carried out after Genovese’s brutal attack, Darley and Latané wanted to see whether this effect would play out in a laboratory setting. They recruited male Columbia University students with an ad for taking part in a survey about campus life. When the students arrived, they were shown into a room and told to fill out a preliminary questionnaire. While in this room, subjects were either:

1. alone

2. with two other naive subjects

3. with two researchers pretending to be subjects

While the subjects were filling out the questionnaire, the experimenters slowly introduced (harmless) smoke through a small vent in the wall. The smoke flowed in steadily, and after several minutes it became so thick that it obscured vision. The researchers watched from behind a one-way mirror to see how subjects in the 3 conditions would respond and whether those responses differed.

What do you think the results were?

When the subject was alone, he would typically notice the smoke, get up and walk to the vent, sniff it, wave his hands in it, feel its temperature, and then, after a short period, leave the room to find someone to report it to; 75% of subjects in this condition reported the smoke. When the subject was in the room with two researchers who had been instructed to act indifferent to the smoke, only 1 in 10 (10%) reported it. The other 9 remained in the room, determinedly working on their questionnaires while coughing and waving the smoke out of their eyes! Finally, when all 3 bystanders were naive, only 1 of the 24 people, run in 8 groups, reported the smoke within the first 4 minutes, before the room became highly unpleasant!
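A little probability shows why the group result is so striking. As a rough sketch, assume each person in a three-person group would react independently and report the smoke at the alone-condition rate of 75%. Then the chance that at least one of the three reports it is 1 − (1 − 0.75)³, which is about 98%. The observed figure in the naive groups was 1 group out of 8. The time windows differ (the 1-in-8 figure counts only the first 4 minutes), so treat this as a rough comparison; the function name `p_at_least_one` is just an illustrative helper.

```python
# Toy comparison: if group members reacted independently, how often would
# at least one of them report the smoke?
# ASSUMPTIONS: independence, and the 75% alone-condition rate per person.

def p_at_least_one(p_individual, group_size):
    """Probability that at least one of group_size independent people acts."""
    return 1 - (1 - p_individual) ** group_size


expected = p_at_least_one(0.75, 3)
observed = 1 / 8  # naive groups with a report in the first 4 minutes

print(f"expected if independent: {expected:.1%}")
print(f"observed in naive groups: {observed:.1%}")
```

With more pairs of eyes in the room, reporting should have become more likely, not less; the gap between roughly 98% and 12.5% is what points to the group itself changing how each person interpreted the situation.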

Why does this happen?

In an exit interview, most subjects mentioned the smoke when asked whether they had experienced any difficulty with the questionnaire; they had all noticed it. Subjects who had reported the smoke during the experiment consistently said they thought it was “strange” and that “it looked like something was wrong.” Subjects who had not reported it had almost all decided that it was not a fire and had come up with some alternative explanation for its presence. Some were convinced it was steam or a side effect of the air conditioning. Two people even said they thought it was “truth gas” introduced to get them to answer the questions truthfully (the researchers report being shocked that these subjects did not seem at all disturbed by this belief).

Perhaps the subjects felt that having other people present increased their ability to cope with a fire. It is also possible that subjects in the group conditions wanted to appear brave while others were watching. The researchers, however, make a different argument. As they discovered in follow-up interviews, it was not that subjects were unworried about a fire or willing to endure danger. Rather, the subjects who did not report the smoke said they had decided there was no fire at all: the smoke had a harmless source, a conclusion that was highly susceptible to social influence.

In the news reports of Catherine Genovese’s murder, reporters blamed the apathy that supposedly grips people who live in big cities. Darley and Latané concluded instead that the failure to intervene may be better explained by the relationship among bystanders than by the relationship between a victim and a bystander.

I have always found this social psychology work fascinating and hugely informative and applicable to how I navigate the world. Knowing the traps and pitfalls that your brain can unknowingly fall victim to is the first step towards avoiding them. Here we see how the presence and behavior of others can influence an individual’s response in an emergency situation.

So, if you ever find yourself in an emergency situation (and I hope you don’t), try to remember the lessons we have learned from Catherine Genovese and think about whether or not you want to remain passive.

Citations:

http://listverse.com/2009/11/02/10-notorious-cases-of-the-bystander-effect/

http://en.wikipedia.org/wiki/Murder_of_Kitty_Genovese

http://psycnet.apa.org/journals/bul/137/4/517/

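Latané, B., & Darley, J. M. (1968). Group inhibition of bystander intervention in emergencies. Journal of Personality and Social Psychology, 10(3), 215–221.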

Quick review: Epigenetics

In the winter of 1944 to 1945, the Dutch experienced a horrific famine in the German-occupied parts of the country that would come to be known as the Hongerwinter (“Hunger Winter”). By February 1945, adult rations in the west amounted to about 580 kilocalories per day, and by the end of the famine more than 22,000 people had died.

As you might imagine, the famine also affected the health of the people who lived through it and survived. The children of women who were pregnant at the time were lighter and shorter than those whose mothers had not gone through the famine. What was unexpected was that the children of those children, the grandchildren of the women who lived through the famine, also experienced poorer health in later life. How can this be if their own mothers had a healthy food intake during pregnancy? The answer may be found in a relatively new field of genetics: epigenetics.

What is epigenetics?

So we all remember the basics of DNA (maybe?): a double helix made of four kinds of nucleotide bases (A, T, G, C) attached to a sugar-phosphate backbone, which contains all your genes.

You use these genes as blueprints that tell your body how to make the proteins that are essential to life. If you think about your DNA as a giant library, each gene would be one book with a set of instructions on how to make a specific protein. But all these books take up space. If you laid each book on the floor next to the others, you’d need an unreasonable amount of space to store them all. We need a more efficient storage mechanism. The solution is to stack the books and place each stack very close to the next, at the cost of not having every book available for reading at all times. In the Berkeley Library, the stacks were pushed together with no aisles between them. If you needed a specific book, you’d turn a crank at the end of the stack to open up enough space to walk in and reach it.

DNA organization follows a similar concept. Each human cell contains about 1.8 meters of DNA, but when it is wound up into a structure called chromatin, it is only 0.09 mm long! The DNA is wound around proteins called histones (the cream-colored blobs in the picture below) like yarn wrapped around a ball. When the DNA needs to be accessed to make a protein, the structure has to be unwound so the specific DNA sequence can be read.

We also have a process called methylation, which adds methyl groups to DNA. I like to think of this as an annotation system the body uses to remember which genes to express: bright red sticky notes that flag the books and pages that are particularly important or block off those that are not. Methylation helps the cell keep track of which genes to express and which ones can stay locked away in storage.

So how does this relate back to our story of the famine? How can a woman’s health be affected by her grandmother experiencing hardship?

Histones and methyl groups help determine how the DNA gets used and which proteins are made in each cell; in other words, how the genes are expressed. These annotations can be copied when cells divide, preserving a record of the environment an individual has experienced. This suggests a possible explanation for the grandchildren’s poorer health. Experiencing the famine may have changed the way the DNA was annotated, and this information could have been passed on to future generations.

This is epigenetics: the study of changes in gene expression that occur when environmental triggers add (or remove) labels on DNA. The DNA sequence itself does not change; how the genes are expressed does. This fascinating new avenue of study may help us fight diseases such as cancer and schizophrenia, and may even help us put to rest the old question of nature vs. nurture!

Citations:

http://www.how-to-draw-cartoons-online.com/dna-sequencing.html

http://www.biology.emory.edu/research/Lucchesi/html/research.html

Is there anybody in there?

Imagine you wake up one day and, while slowly emerging from the groggy haze of sleep, you try to stretch your arms; nothing happens. You try to move your legs, wiggle your toes; nothing happens. You try to look around, but you can only stare at the ceiling. You try to call for help, but still nothing. A wave of fear and panic surges through you. Snapping awake in an instant, you realize that your whole body is paralyzed and that you have somehow ended up in the hospital. This is what happened to French fashion magazine editor Jean-Dominique Bauby in 1995, when he suffered a massive stroke. His mental faculties remained intact, but he was completely paralyzed and unable to speak. He was left with just one ability: blinking his left eyelid. Through this sole connection from his mind to the outside world, he wrote his memoir letter by letter, blinking when an assistant reciting the alphabet reached the letter he wanted. Needless to say, the book is short (although I highly recommend it!).

But what happens to people who are not left with any connection at all? What if they are completely unable to move, stranded within their own minds? What if they are still in there but have no way of showing it, never able to find a bridge of communication? Until we consider such a catastrophe, we cannot really comprehend how vital movement is to our everyday functioning. When it is lost, the lines that define what makes us conscious human beings begin to blur. Now imagine someone who cannot move and whose cognitive functioning may not be fully intact. Doctors would assess whether this person can make any purposeful, voluntary response to various external stimuli, searching for any sign of awareness. If none could be observed, the person would be diagnosed as being in a vegetative state, and recovery would be considered unlikely. But does this diagnosis tell the whole story?

In 2005, a 23-year-old woman suffered severe trauma to her brain in a terrible car accident. Five months later, she remained unresponsive and was diagnosed as being in a vegetative state. Some time after this, Dr. Adrian Owen (no relation), now at Western University in Canada, started to work with her. He wanted to find out whether he could use neuroimaging to assess her level of consciousness (if any remained) in a new way. While in an fMRI scanner, the woman was given one of two instructions: either

1. imagine playing tennis, or

2. imagine walking through your home.

Healthy people show characteristic, distinguishable patterns of neural activity in response to each of these instructions. Imagining tennis reliably activates a region called the supplementary motor area (SMA); the spatial task of walking through one’s home reliably activates other regions, such as the premotor cortex (PMC), the posterior parietal cortex (PPC), and the parahippocampal gyrus (PPA).

So what would it mean if this woman, who was thought to have no remaining awareness, could selectively modulate her brain activity to perform the given task? What would it mean if the neural pattern showed that she could hear and respond to directions? The image below shows the results from the study. The top panel shows the characteristic responses elicited from a healthy individual. The bottom panel displays what was observed in the patient. Pretty similar, huh?

Dr. Owen then took this work a step further. He started working with a man who had suffered severe head trauma in a car crash in 1999, at age 26. When clinically evaluated, he could not open his eyes or produce any sound, and over the next 12 years his condition remained unchanged: a persistent vegetative state. In 2012, this young man had his first fMRI scan, in which he was given the same instructions to either imagine hitting a tennis ball or think about navigating around his house.

When his activity turned out to be roughly similar to that of healthy controls (as in the previous example), researchers realized that this paradigm could be used to learn more about the young man’s inner mental capabilities. The distinct neural responses could be used to signal answers to yes/no questions. When asked a question, imagining playing tennis would signal a “yes” response; imagining walking through the house would signal a “no” response. Since the two neural patterns are clearly different, the answer could be reliably detected, but would he be able to answer verifiable questions correctly?

If asked “Is your name John?” (the correct answer was yes), he would have to imagine playing tennis to signify an answer of “yes”; if asked “Is your name Mike?”, he would have to imagine navigating his house to signify that his name was not Mike. This task requires sustained attention, working memory, language comprehension and knowledge of the answer to the question, abilities traditionally thought unlikely to remain for someone in a vegetative state.

The researchers questioned the young man using this technique. They wanted to assess whether he retained basic knowledge about the world, so they asked him whether a banana was yellow. They wanted to know if he knew who he was, so they asked him his name (John or Mike). They wanted to know if he was oriented in time and space, so they asked him what year it was (1999 or 2012) and where he was (in a hospital or a supermarket). Finally, they wanted to know whether he had acquired any new information since the accident, so they asked him his caretaker’s name (it was Sarah; see the result below). They successfully obtained answers to 12 different questions using this approach.

These results suggest that this man showed evidence of focused attention, language comprehension, and working memory. He was able to provide information about his personal preferences (whether he liked watching hockey on TV) as well as his bodily state (whether he was in pain). Despite these astonishing findings, his diagnosis of a persistent vegetative state was not technically incorrect, because that diagnosis rests on observable behavior at the bedside, and he still produced none. These results left his diagnosis unchanged, yet the clinical assessment clearly fails to capture his inner mental capabilities. So where does this leave us?

Of course, these examples are exceptions. They should not be used as evidence that all vegetative state patients exist in a horrific prison of the mind, unable to communicate because of paralysis. It is more complicated than that. In a study of 54 patients in either a persistent vegetative state or a minimally conscious state, only 5 showed evidence of being able to modulate their neural activity in this way, and some skeptics would argue that even these few are not showing true evidence of consciousness. More work is clearly needed to smooth out the wrinkles in these first unusual observations and to determine where the vegetative state sits on the spectrum of consciousness. That said, this patient has remained in a vegetative state for over 12 years, which qualifies him as being in a permanent vegetative state. Because of this, he could become the subject of a legal petition to withdraw life support (nutrition and hydration). Would this new imaging work influence such a decision?

A vegetative state is diagnosed by searching for external, observable behaviors, the only signs that clinicians can currently detect at the bedside. Dr. Owen’s work demonstrates that advances in imaging technology might allow us to peek inside the brain of a person in a vegetative state and observe his or her internal neural activity. Could this internal behavior count as an observable behavior in a diagnosis? Although it is not my goal to insert my personal beliefs here, I think this neuroscience suggests that we need to think carefully about what it really means for someone to be classified as being in a vegetative state.

The word “vegetative” implies that people in this condition have the same mental capabilities as a vegetable: none. This work suggests that, in a small subset of patients, this may not be the case.

All of this naturally raises questions about these patients’ quality of life, and about the early potential of communicating with them about it through imaging. If a family member of yours had left clear instructions to turn off life support in such a circumstance, would you want these tests done first? Should they be included in diagnosis? How should this early research be communicated to family members in the most sensitive and ethical ways? Dr. Owen and his team are working through some of these issues in some fascinating recent publications. My purpose here is not to make philosophical arguments about how these very special patients should be viewed or treated, but rather to provide some science to help inform your own beliefs on the subject.

Owen believes that we may one day refine this approach enough to assess whether a patient in a vegetative state has wishes, and that neuroimaging in this area has the potential to greatly improve diagnostic accuracy and treatment outcomes. We could also use it to communicate with these individuals, much as Jean-Dominique Bauby used his blinking eye as his sole link to the outside world. Although some scientists remain skeptical of this work and claim there is no evidence of consciousness, Owen responds, “in the end if they say they have no reason to believe the patient is conscious, I say ‘fine, but I have no reason to believe you are either’.” I look forward to seeing where this work takes us in the future.

Citations:

http://www.nature.com/nrn/journal/v14/n11/full/nrn3608.html

http://www.sciencemag.org/content/313/5792/1402.abstract

http://www.nejm.org/doi/full/10.1056/NEJMoa0905370

http://www.nature.com/news/neuroscience-the-mind-reader-1.10816

Welcome to Gray Matters!

Henry was a seemingly normal boy growing up in Hartford, Connecticut in the 1930s. After a bicycle accident in childhood, he started to suffer from terrible seizures. Despite high doses of anticonvulsant medication, these seizures became severely debilitating in adulthood. You can imagine it might be hard to hold a job when you could start foaming at the mouth, biting your tongue and convulsing uncontrollably at any minute. As a solution, his neurosurgeon decided to drill into his skull and remove a melon-ball-sized chunk of neural tissue from deep within each side of his brain (the medial temporal lobes, including much of the hippocampus). Although the surgery succeeded in preventing seizures, it left Henry with a strange problem. For the rest of his life, he could no longer form new memories. If you have ever seen the movie Memento (and if you haven’t, you should), his condition was like the one the main character suffers from. He could no longer remember when he had eaten; he would read the same magazine over and over again; he had to leave himself countless notes about everything. Every day, his doctor would have to introduce himself as if they had never met before, and Henry would react with the same distress each time he was told that his father had died many years before. Despite this strange condition, Henry’s IQ was above average, and he showed evidence of other types of learning. He just could not remember having learned anything. Henry Molaison died in 2008, and he is possibly one of the most studied and fascinating individuals in the history of neuroscience.

Over the last decade or two, the field of neuroscience has exploded. After the great success of the Human Genome Project, many people considered the brain to be the final frontier, the last unexplored territory and even the holy grail of science. I became fascinated by the subject in college during my Introduction to Cognitive Science class, where I was introduced to strange neurological cases like Henry’s. I read about people who lost the ability to recognize faces, who could no longer perceive motion, or who developed uncharacteristic obsessions with drawing or religion after a brain injury. I became intrigued by how that clump of cells in your head gives rise to everything you experience, as well as by the bizarre and surprising consequences that pop up when things go wrong. Since college, I have wholeheartedly pursued the subject, not only in my career but in my free time as well.

I have been known to devour books by Oliver Sacks and Eric Kandel and rave to people about the stories in them. I have become transfixed by stories of religious auditory hallucinations in temporal lobe epilepsy and of a man who became obsessed with piano music after being struck by lightning. There is the autistic savant known as “the living camera,” who can draw the whole of Rome from memory after flying over it in a helicopter, getting details right down to the number of windows on each major building. The brain is a powerful and mysterious organ, and these stories of its strange intricacies will always fascinate me.

My academic training as a neuroscientist requires that I complete a highly specialized project, which takes up the majority of my time, but the rigorous coursework, teaching and independent study required in the early years of the PhD have given me something arguably more valuable. They have taught me to speak science. Yes, science can often seem like its own language. I remember reading academic journal articles when I first decided to dive into research and thinking to myself, “this may as well be written in French!” (a language I definitely do not speak). After years of dedicated perseverance, I have become a novice speaker of neuroscience. I can now read almost any journal article and understand the gist of what the scientists did and why it matters.

Once I reached this level, I discovered a whole body of work that often shifts my perspective on life. I am constantly amazed by new research and hot avenues of exploration in the field. When I faced conflict as a child, my mother always told me that I had a choice: I could get upset, or I could choose to do something more useful. At the time, I could not escape the grip of powerful emotion and dismissed her advice as empty words of comfort. However, as I’ve grown up and used neuroscience to inform my approach to conflict, I have come to see the insight in her advice. I suppose I’m a bit biased, but I truly believe that knowledge of emerging findings in neuroscience can inform your everyday life and how you view the world. It can empower you and allow you to see things more clearly. Charles Darwin said, “The highest possible stage in moral culture is when we recognize that we ought to control our thoughts.” I have found this incredibly powerful, and I want to share it with you.

Of course, the fun is always in sharing ideas and discussing them with others; with science, however, the language barrier often becomes a problem, and those who do not speak the language get left out. Academic papers contain jargon and require background knowledge, which makes them inaccessible to those without it. I have sat through countless lectures at top universities and international conferences where I got lost and had to go back and find ways to teach myself the material. At first, I assumed it was because I was not bright enough or could not keep up, but over the years I realized it was mainly due to bad teaching and ineffective communication. When I went through the material in a more accessible way, I realized how exciting it was. If only the lecturer had just said that!

My goal with Gray Matters is to break down this barrier completely by translating the science into accessible nuggets that people can appreciate. I want to share my enthusiasm and show people why this subject is so amazing! Over the last few years, I have taught neuroscience in settings ranging from explaining a sheep’s brain to a fascinated 10-year-old, to demonstrating cellular respiration to a high schooler, to teaching the cranial nerves to advanced graduate students. Despite the varied ages and levels of my students, one thing always remains the same: the science needs to be translated. I have found that simple drawings, explanations and analogies help tremendously, and I hope to use these tools to communicate the newest and hottest topics in the field.

My aim is to make Gray Matters accessible to people from any background. I have friends who work in areas such as marketing, tech and even opera singing, but there is not one person who hasn’t found something I’ve told them about neuroscience relevant to their field or just plain cool. To my artistic friends, I send articles about creativity, genius, musical hallucinations, and using art in recovery; to my tech friends, I send articles about new devices, innovative technologies and the future of communication; to my doctor friends, I send articles about the importance of treating the mind and body, new case studies and connections between the brain and other organs; to my friends in banking, I recommend Daniel Kahneman’s work and warn them about the pitfalls of loss aversion. There is something for everyone! Now, instead of pummeling friends and family with these specialized tidbits, I thought I’d find a place to write them all down. That way, people can read them at their leisure (or choose to opt out completely!). I hope that this blog will enrich your neuroscientific knowledge and that you can take it with you in your future endeavors, whatever they may be.